Why enterprise AI projects fail in regulated industries

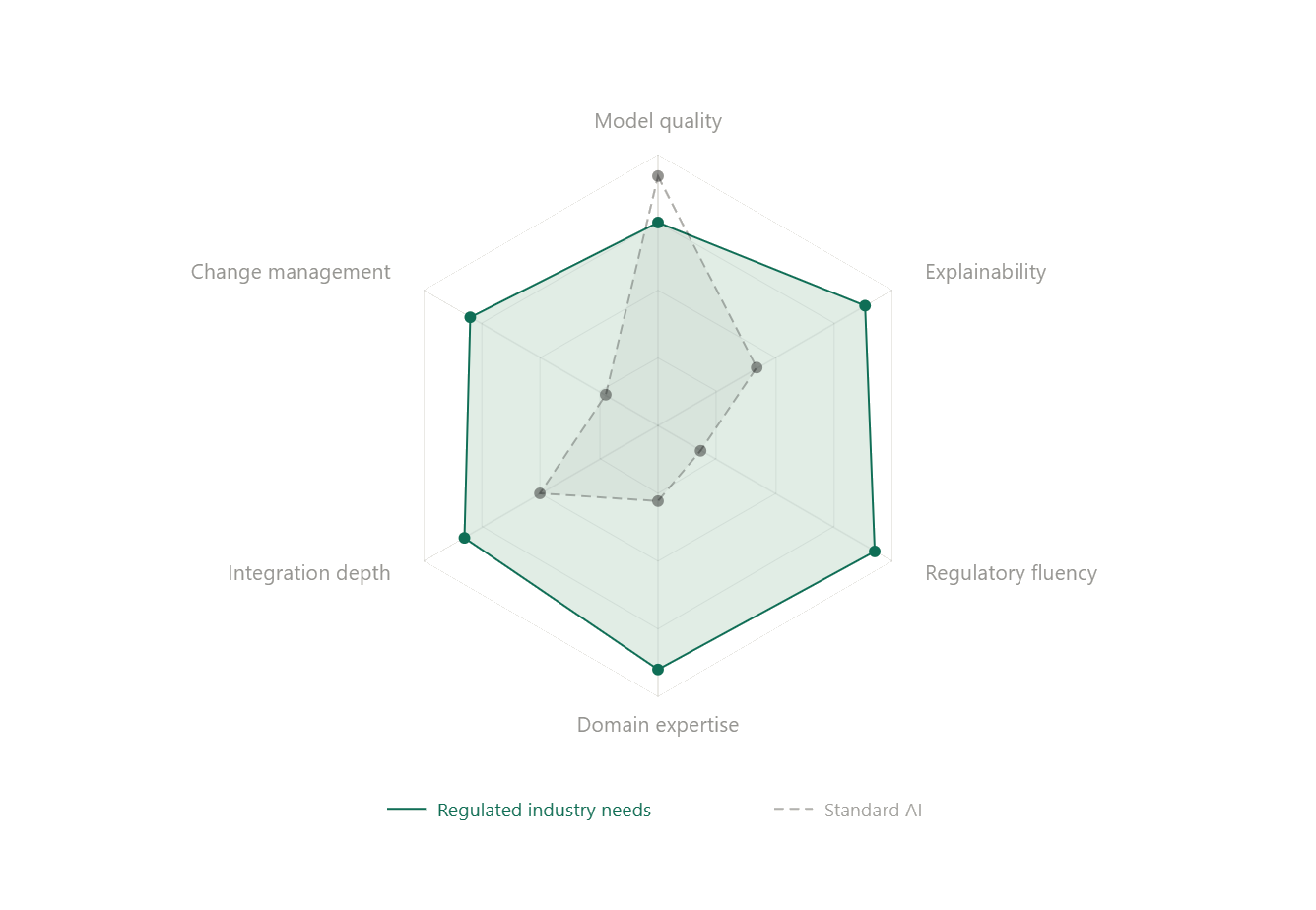

Most failures aren't about models. They're about the environment those models are dropped into — and the mismatch between how AI is built and how regulated industries actually operate.

AI systems are built assuming flexibility: iterate fast, retrain often, optimize continuously. Regulated industries are built on the opposite: stability, traceability, and strict control over change.

That mismatch is where things break. What works in isolation rarely survives in production.

Over time, we’ve seen a consistent pattern of failure modes emerge:

The Eight Failure Modes

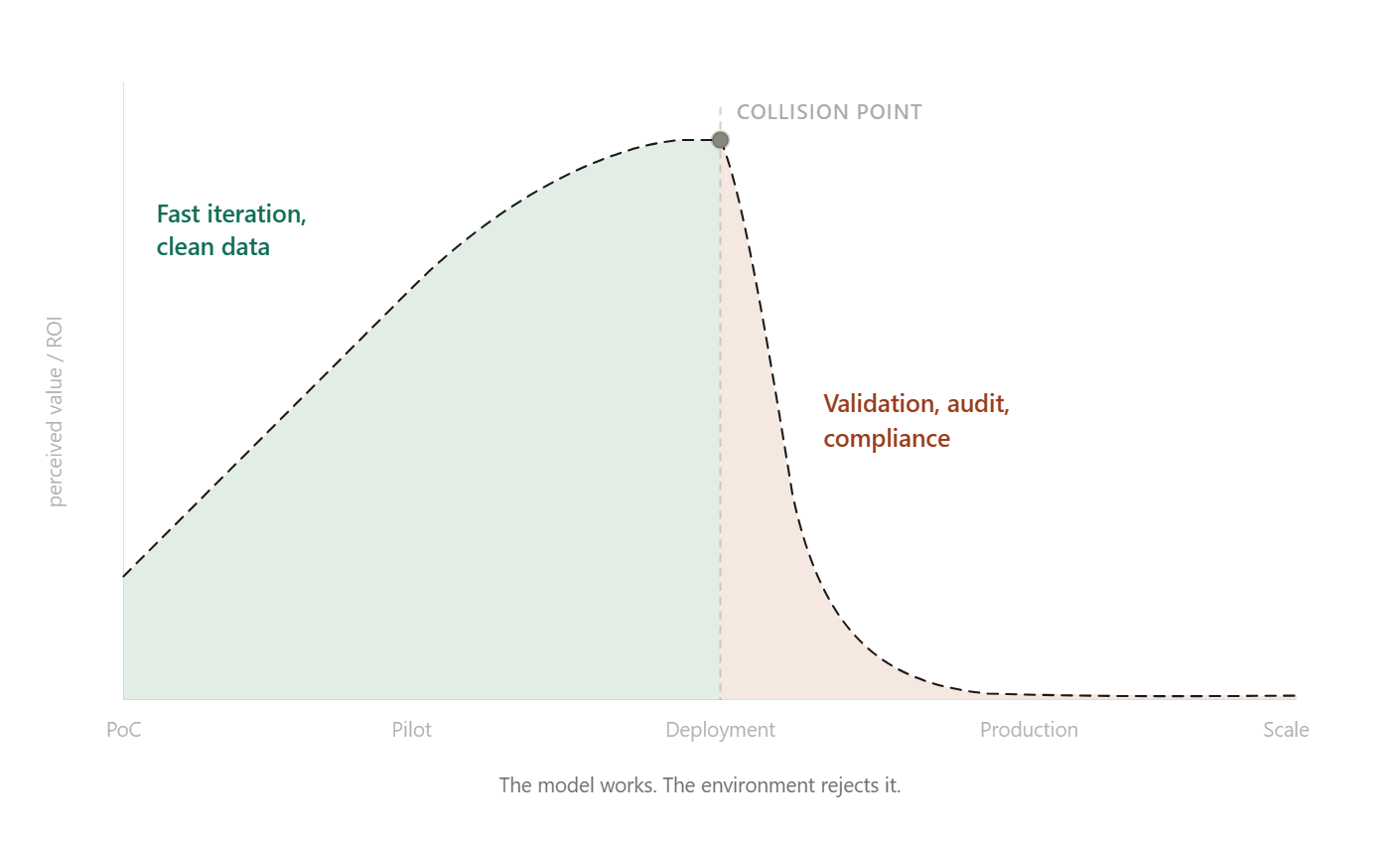

01. The PoC illusion

Proofs of concept are built in clean, unconstrained environments.

Production is not.

Once deployed, models must integrate with legacy systems, pass validation protocols, and comply with regulatory standards.

The result: expected ROI gets absorbed by integration and governance overhead.

02. No defensible validation

In regulated environments, "it works" is meaningless. You need to prove why it works, how it behaves over time, and how every decision can be audited. This is where explainability plays a key role.

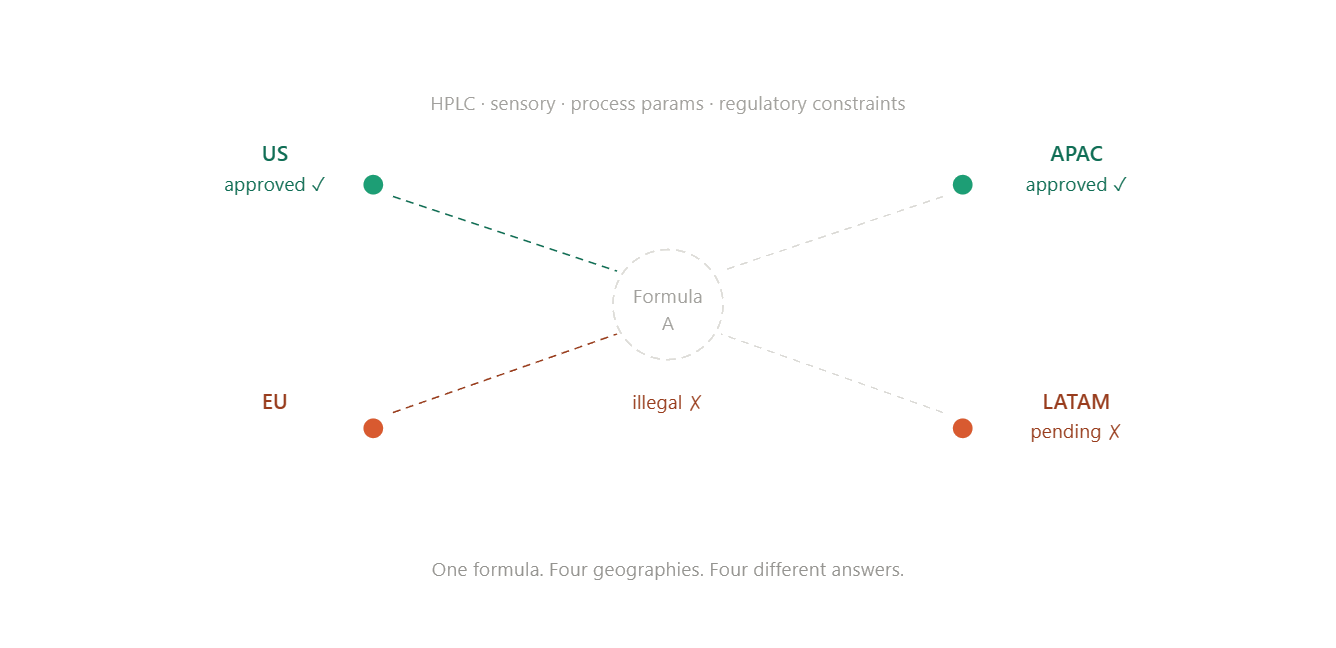

03. Underestimating domain complexity

In industries like food, pharma, or chemicals, data is not just text or tables. It's analytical measurements, sensory data, process parameters, and regulatory constraints that often differ across geographies.

A formulation that works in one market may not be legal in another. Models that ignore this complexity fail quickly.

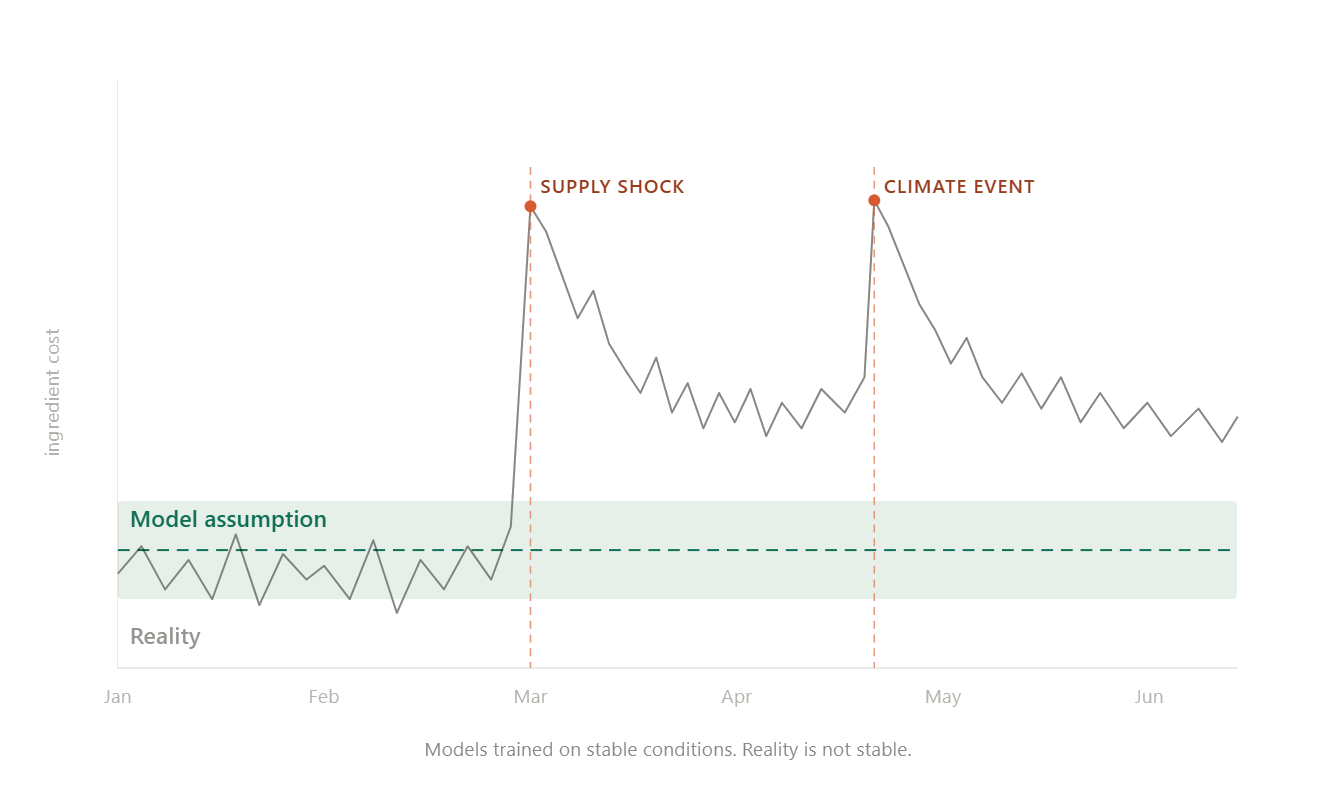

04. Ignoring real-world volatility

Models are trained assuming relatively stable conditions. Reality is not. Ingredient costs fluctuate. Supply chains break. Climate events make key inputs unavailable. If your system cannot adapt to that variability, it is useless.

05. Closed systems in open environments

Many AI platforms assume they control the stack. Enterprises don't work that way. Clients need systems that can ingest their proprietary data, connect to their infrastructure, and even incorporate their existing models. Not because those models are perfect, but because organizations cannot afford to discard years of prior investment.

06. Security and deployment mismatch

If your solution requires sensitive data to leave the client's environment, adoption will stall. Regulated companies expect in-tenant deployments, audit trails, and full control over data access.

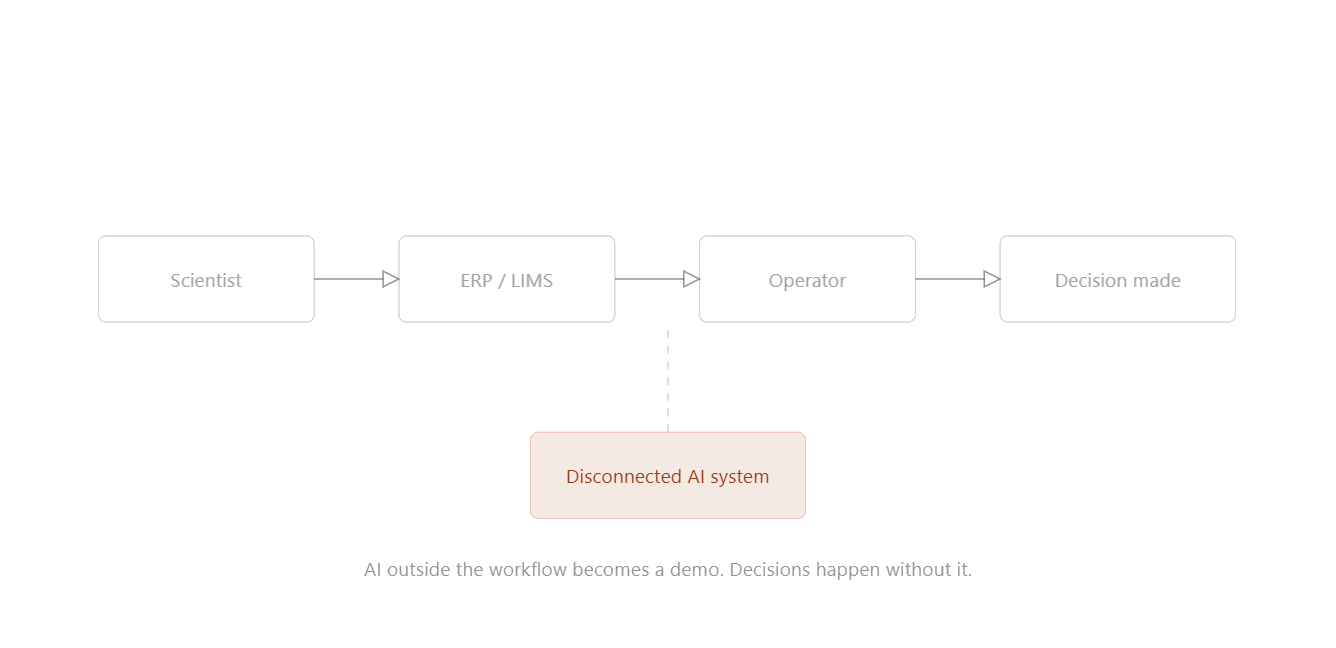

07. No integration into decision workflows

AI that sits outside existing workflows creates no real value. To create value, it must plug directly into how scientists, operators, and decision-makers already work.

Otherwise, it remains a demo.

08. Organizational rejection

Many AI projects don’t fail technically — they fail institutionally.

Innovation teams optimize for speed.

Compliance and IT optimize for control.

Without alignment, projects stall before reaching production. Change management is critical here, and you should own its execution.

Winning in regulated AI is not about building better models. It's about building systems that can survive validation, integration, regulatory constraints, and real-world volatility from day one.
